Friendly Fire In Information Warfare

When Truth-Defenders Accidentally Teach Relativism

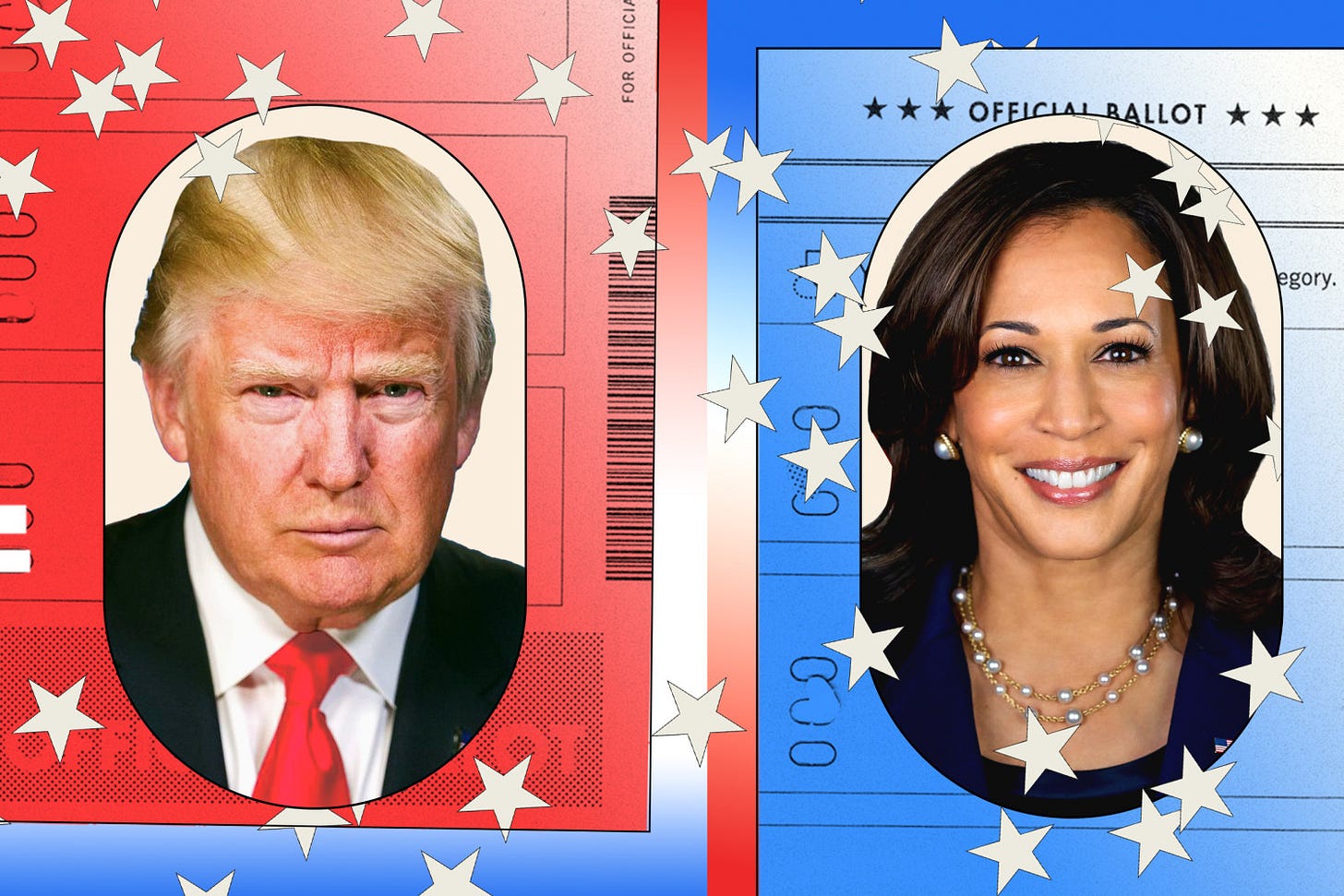

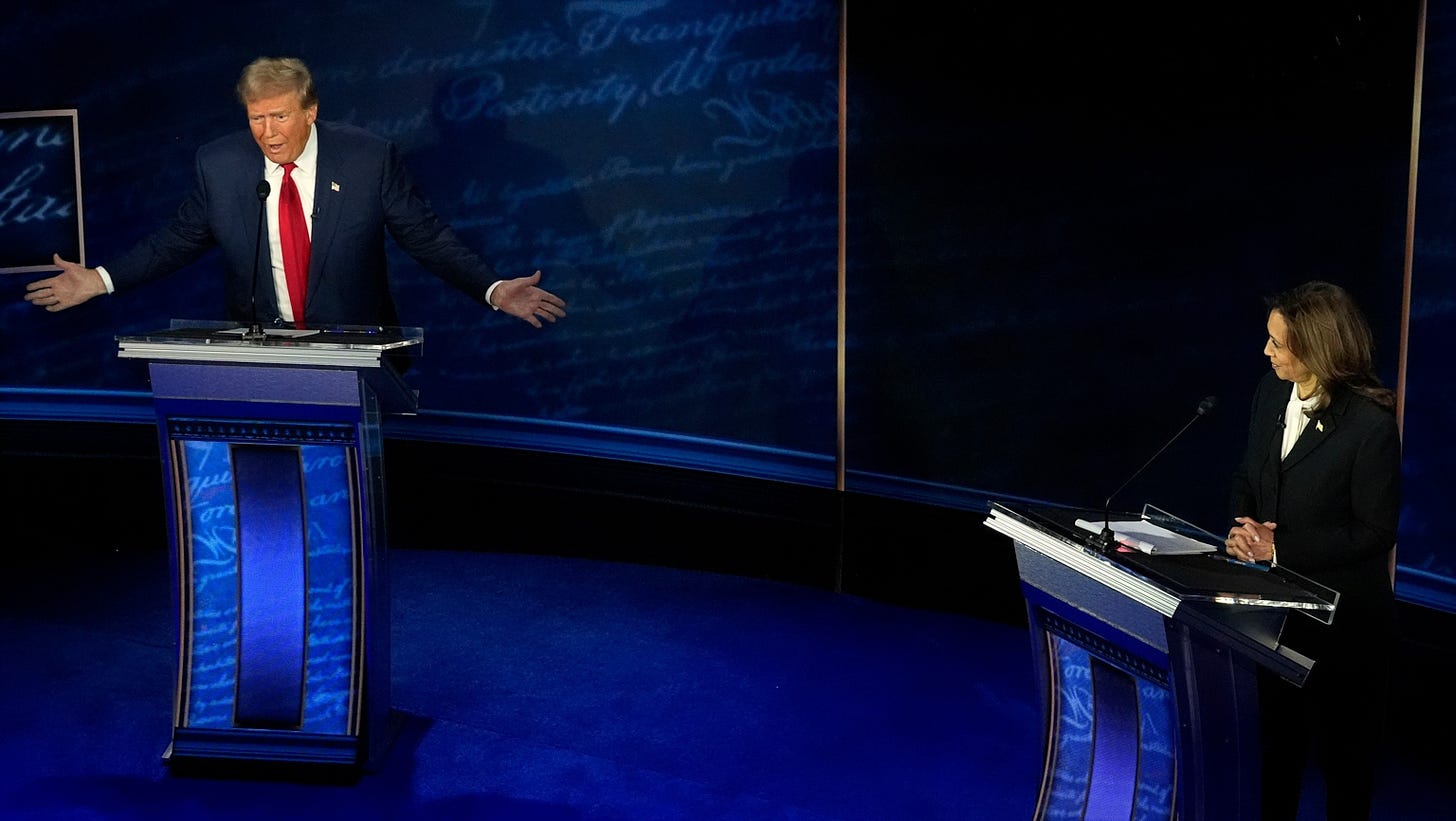

During the September 2024 Trump-Harris debate, Kamala Harris claimed Trump’s economic plan would cost middle-class families “about $4,000 more a year.”

Within hours, six major fact-checkers published their verdicts. ABC News noted estimates “vary widely from $1,700 to nearly $4,000.” CBS called it “a high-end estimate from a liberal think tank.” CNN emphasised “the precise impact is hard to predict.” NPR clarified it wasn’t a sales tax but proposed tariffs.

Every fact-checker was being rigorous.

Each added valuable context.

And none were lying.

But voters watching this unfold learned something dangerous: even people whose job is determining the truth can’t agree on what’s true.

I call this “friendly fire in the information war.”

Not because truth-defenders attack each other. They don’t.

Because in competing to be most accurate, most contextual, most rigorous, they accidentally demonstrate that accuracy and rigor look indistinguishable from partisan positioning.

Each outlet becomes a mirror, and audiences choose their reflection.

The Mechanism: How Correction Becomes Noise

For years, fact-checkers feared the “backfire effect,” which is the idea that correcting false beliefs makes people believe them more strongly. Multiple research teams tried to replicate the original finding and failed.

The real problem isn’t that corrections make things worse. Corrections work initially, but their effects decay quickly and get overwhelmed by ongoing flows of contradictory information.

A single correction nudges people toward accuracy. But when someone encounters five more corrections that week, each emphasising different aspects of the “same” issue, the cumulative effect isn’t enlightenment.

It’s noise.

Why does this happen? I theorise that there are three interacting forces.

Outlets need distinct angles to stand out.

Platform algorithms amplify novel takes over settled ones. Facebook and X promote engagement, not consensus.

Institutional fear of appearing partisan makes fact-checkers hyper-calibrate their language, creating subtle variations that read as fundamental disagreement.

When shown high scientific consensus such as “97% of experts agreeing,” about a quarter of people become less convinced, interpreting consensus as evidence of collusion rather than convergent truth.

So the choice is stark: show unity, and some suspect conspiracy; show disagreement, and everyone suspects ignorance.

Narrative warfare isn’t about information; it’s about the meaning of information. Every correction contains an act of repetition. To say “X is false” requires articulating X. Psycholinguistic research suggests the strength of this effect depends on exposure frequency and emotional salience, but the pattern holds: negation tends to reinforce the claim it challenges.

When one fact-checker says “No, it’s not X, it’s Y” and another says “No, it’s not X, it’s Z,” audiences don’t hear methodological disagreement. They hear fundamental disagreement about reality.

Russia’s “War on Fakes” operation publishes so many contradictory fact-checks they sometimes refute each other, deliberately oversaturating the information environment.

They’re not trying to make people believe lies. They’re trying to make people stop believing in truth.

And then actual truth-defenders accidentally help by publishing multiple fact-checks with six different ratings for the same underlying claim.

Humans don’t store facts as propositions that can be updated with new data. Cognitive science from Jerome Bruner to Daniel Kahneman shows we store information as stories that signal trust. That means the fight for truth is the fight for trust, not for facts themselves. When multiple trusted sources tell incompatible stories about the same event, trust fractures faster than any single lie could break it.

One in four fact-checked U.S. political claims gets repeated by politicians anyway, often with variations requiring slightly different corrections. Vaccine hesitancy persists despite overwhelming medical consensus. Election denial becomes economically sustainable as an identity market. Policy consensus erodes because agreed-upon facts no longer exist to anchor debate.

The Coordination Trap

The obvious solution is that fact-checkers should coordinate.

They should agree on standards and unify their messaging.

Except Meta ended its U.S. fact-checking partnerships in early 2025, switching to Community Notes. Why? Because coordinated fact-checking started looking like centralised truth control. Organisations critical of government digital policy efforts, like the Foundation for Freedom Online, now frame counter-disinformation funding as a “censorship empire,” portraying researchers as partisan actors coordinating to suppress speech.

The very solution to friendly fire must be coordination.

If truth-defenders don’t coordinate, they create the problem through fragmentation.

But if they do coordinate, they confirm suspicions of centralised control.

It’s not hopeless. During Ghana’s December 2024 elections, a fact-checking coalition made up of multiple organisations collaborating through coordinated situation rooms successfully tracked and debunked disinformation.

What made it work? They showed their process. They explained why multiple organisations checking the same claims increased confidence rather than suggested disagreement. They built the meta-narrative making collaboration look like rigor instead of collusion.

None of these practices requires suppressing legitimate disagreement. They require coordination around core facts and transparency about methodological variations.

Methodological pluralism works without creating epistemic chaos, but only with frameworks helping audiences distinguish “emphasising different aspects” from “fundamentally disagreeing about what’s true.”

What’s at Stake

During COVID-19, trust in scientific institutions declined not from scientific disagreement itself, but from how those disagreements were presented, with each faction claiming to represent THE science rather than one legitimate perspective among several.

When FactCheck.org emphasises “10 million border encounters” and CNN emphasises “encounters aren’t admissions” and NPR explains the difference between encounters and actual admissions, that’s not three different truths. That’s three levels of granularity on the same truth. Without a meta-narrative explaining that precision isn’t disagreement, it reads as: even experts can’t get their story straight.

And when experts are perceived as incoherent, trusting them starts to look like a fallacy.

Open democracies depend on participatory consent, which depends on citizens investing in a sustainable shared narrative about reality. The institutions tasked with maintaining that shared reality, namely journalists, fact-checkers, and scientists, should align.

Democracy can’t function on “everyone’s got their own truth.” It needs foundational claims transcending partisan interpretation. Why? Elections have outcomes, vaccines have effects, climate has measurements. When friendly fire makes even those foundational claims look framework-dependent, the epistemic foundation making democracy possible gets demolished.

Some major news organisations now strategically choose silence over real-time fact-checking during live events. Not from cowardice but from experience showing immediate fragmented corrections damage credibility more than careful restraint.

That shouldn’t be necessary.

But it is.

The Paradox We Can’t Escape

Transparent pluralism (showing the work, explaining differences, and maintaining clear agreement on core facts) only functions when audiences have sufficient media literacy and institutional trust to understand it.

Right now, both are scarce.

These are tactics that should work but can’t work yet, because adversaries understand that confused allies are more valuable than defeated enemies.

If truth is both cognitive and communal, defending it requires not unanimity of message but consistency of epistemic posture. The friendly fire happens when well-meaning rigor accidentally demonstrates what adversaries claim: that truth is power wearing different masks, and fact-checking is narrative control with footnotes.

But there is a trap here. If we build the coordination infrastructure that prevents fragmentation, we risk confirming fears of centralised truth ministries. If we don’t build it, we guarantee the fragmentation that teaches relativism.

One path preserves institutional legitimacy while surrendering epistemic coherence. The other rescues coherence while sacrificing legitimacy. Neither works alone. Both are necessary. That’s not a paradox with a clever solution. It’s a paradox that has to be managed, indefinitely, by people who understand they’re walking a blade’s edge between chaos and control.

The war isn’t won by who’s most accurate. Accuracy alone doesn’t secure belief. The war is won by whoever convinces the public that accuracy is achievable, that institutions seeking it deserve trust, and that the alternative to imperfect truth-seeking is worse than the imperfect truth we manage to find.

We’re not there yet.

And the distance between here and there keeps growing.